Four Machines.

Four Missions.

From palm-sized AI nodes to discrete-GPU workstations, every machine in the lineup is purpose-built for local AI, edge compute, and sovereign data processing. No cloud required.

We can ship any of these devices to you with OpenClaw pre-installed.

Hala Nexus (STHT1)

Up to 128 GB of unified LPDDR5 memory lets you run 235-billion-parameter models locally. The definitive flagship with AMD Strix Halo AI 395.

CPU

Strix Halo AI 395 (16C)

Memory

128 GB LPDDR5

AI / NPU

80 TOPS NPU

GPU

40CU RDNA 3.5

Free shipping included

Available with OpenClaw pre-installed — we can send it to you ready to run.

Hala Pulse (STHT1)

The same 16-core Strix Halo power as the Nexus, in a sleek 127 mm metal chassis. 128 GB of RAM for unrestricted local AI compute.

CPU

Strix Halo AI 395 (16C)

Memory

128 GB LPDDR5

AI / NPU

80 TOPS NPU

Volume

1.3 L (127 × 127 mm)

Free shipping included

Available with OpenClaw pre-installed — we can send it to you ready to run.

Hala Apex (STHT1)

A high-performance AI workstation with the 12-core Strix Halo AI 385. Professional-grade performance in a compact, mesh-cooled chassis.

CPU

Strix Halo AI 385 (12C)

Memory

Up to 64 GB DDR5

AI / NPU

80 TOPS NPU

GPU

32CU RDNA 3.5

Free shipping included

Available with OpenClaw pre-installed — we can send it to you ready to run.

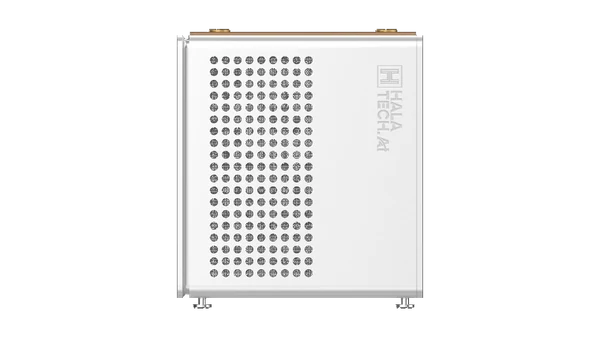

Hala Core (STHT1)

Secure, local AI for every desk. AMD Ryzen 7 7840 power in a striking vertical mesh tower. The gateway to AI sovereignty.

CPU

AMD Ryzen 7 7840 (8C)

Memory

32 GB LPDDR5

AI / NPU

16 TOPS NPU

GPU

12CU RDNA Graphics

Free shipping included

Available with OpenClaw pre-installed — we can send it to you ready to run.

Your Device Runs OpenClaw.

AI Agents, Fully Offline.

OpenClaw is the open-source AI agent framework built for local hardware. Pair it with any Hala machine and you get autonomous agents that write code, conduct research, and complete complex workflows, all without a single API call leaving your building. We can send any of our devices to you with OpenClaw pre-installed.

Autonomous Coding Agents

Let OpenClaw write, review, and ship code without touching a remote API. Full IDE-level agents running on your own silicon.

Multi-Agent Orchestration

Chain specialised agents — researcher, planner, executor — into pipelines that tackle complex, long-horizon tasks end to end.

Total Data Sovereignty

Every prompt and every output stays on your device. No telemetry. No cloud handoff. Built for regulated industries and sensitive work.

No Limits. No Subscriptions.

No API rate caps. No token quotas. No monthly bills. Run agents at full throughput, 24/7 — powered entirely by your Hala hardware.

One Ecosystem, Zero Overlap

Each machine serves a distinct purpose. Together they cover every deployment scenario from desk to data centre.

Every Workload Covered

From 128 GB unified memory for massive LLMs to a discrete GPU for real-time rendering — there's a machine for every job.

0.8L to 6L — Pick Your Size

The AKB56 fits in your palm. The XB35 houses a full desktop GPU. Choose the form factor that matches your deployment.

Whisper-Quiet to Triple-Fan

35W silent mode on the xN88, vapour chamber on the AKB56, triple-fan tower on the XB35. Thermal design matched to workload.

External or Internal Power

Compact adapters for the portable units, a built-in 336W ATX supply for the XB35. No compromises on power delivery.

At a Glance

Side-by-side, so you can pick the right machine in seconds.

| Spec | Hala Nexus (STHT1): The AI Inference King | Hala Pulse (STHT1): Ultra-Compact Powerhouse | Hala Apex (STHT1): The High-Performance Box | Hala Core (STHT1): The Entry-Level AI Node |
|---|---|---|---|---|
| Best For | Massive LLM Inference | Flagship Portability | High-Performance AI Work | Office & Edge AI |
| CPU | Strix Halo AI 395 (16C) | Strix Halo AI 395 (16C) | Strix Halo AI 385 (12C) | AMD Ryzen 7 7840 (8C) |
| NPU / AI | 80 TOPS | 80 TOPS | 80 TOPS | 16 TOPS |
| GPU | 40CU RDNA 3.5 | 40CU RDNA 3.5 | 32CU RDNA 3.5 | 12CU RDNA |
| Max Memory | 128 GB LPDDR5 | 128 GB LPDDR5 | 64 GB DDR5 | 32 GB LPDDR5 |
| Volume | ~2 L | ~1.3 L | ~2.5 L | ~4 L |
| Power | 240W external | 230W external | 240W external | 120W external |
| Starting At | Inquire | Inquire | Inquire | Inquire |

All models ship with Windows 11 · 2× M.2 NVMe slots · WiFi · Bluetooth · 2.5G+ Ethernet
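For readers who want to sanity-check the claim that 128 GB of unified memory can hold a 235-billion-parameter model, here is a rough back-of-the-envelope sketch in Python. It counts model weights only and assumes a fixed number of bits per weight; the function names, the quantization levels, and the `reserve_gb` parameter are illustrative assumptions, not figures from the spec sheet. Real memory use also depends on context length, KV cache, and how much memory the operating system reserves.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits(params_billion: float, bits_per_weight: float,
         memory_gb: float, reserve_gb: float = 0.0) -> bool:
    """True if the weights plus a hypothetical reserve fit in memory_gb."""
    needed = weight_memory_gb(params_billion, bits_per_weight) + reserve_gb
    return needed <= memory_gb


if __name__ == "__main__":
    # Assumed precision levels: 16-bit (unquantized), 8-bit, and 4-bit.
    for bits in (16, 8, 4):
        need = weight_memory_gb(235, bits)
        print(f"{bits}-bit: {need:.1f} GB, fits in 128 GB: {fits(235, bits, 128)}")
```

Under these assumptions, a 235-billion-parameter model needs about 117.5 GB at 4 bits per weight, so it fits in 128 GB only when quantized to roughly 4 bits or lower, with limited headroom left for everything else.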