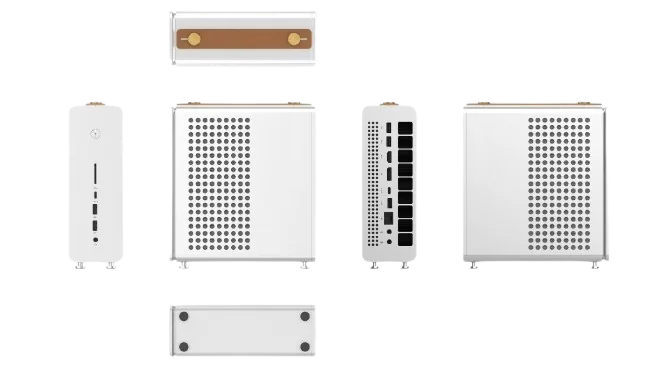

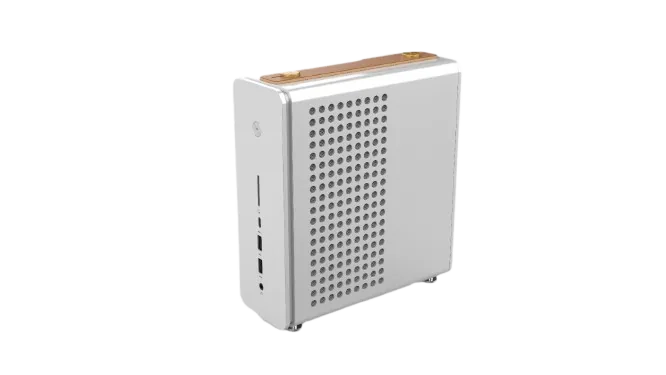

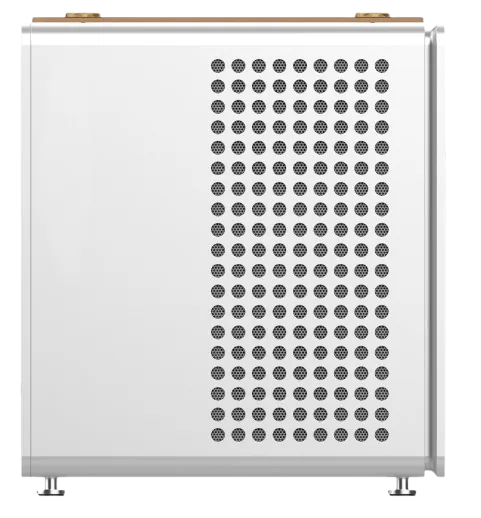

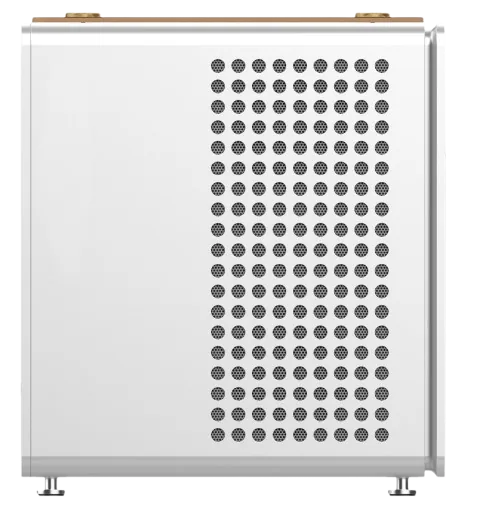

Hala Nexus (STHT1)

Revolutionizing Business with AI Desktops

AI Desktop Workstation powered by AMD Ryzen AI Strix Halo. Run massive LLMs locally with up to 235B parameters. Unprecedented on-device AI performance.

Powerhouse Features

Everything for personal workstations to team deployments.

50 TOPS NPU

50 TOPS NPU dedicated AI processing. Run any LLM locally with zero latency.

RDNA 3.5 Integrated Graphics

AMD integrated graphics with up to 96GB shared memory. Discrete-level performance for AI and creative workflows.

Dual M.2 NVMe Storage

2× M.2 2280 PCIe×4 SSD. SD4.0 card reader for SDXC. Expand for datasets and model caching.

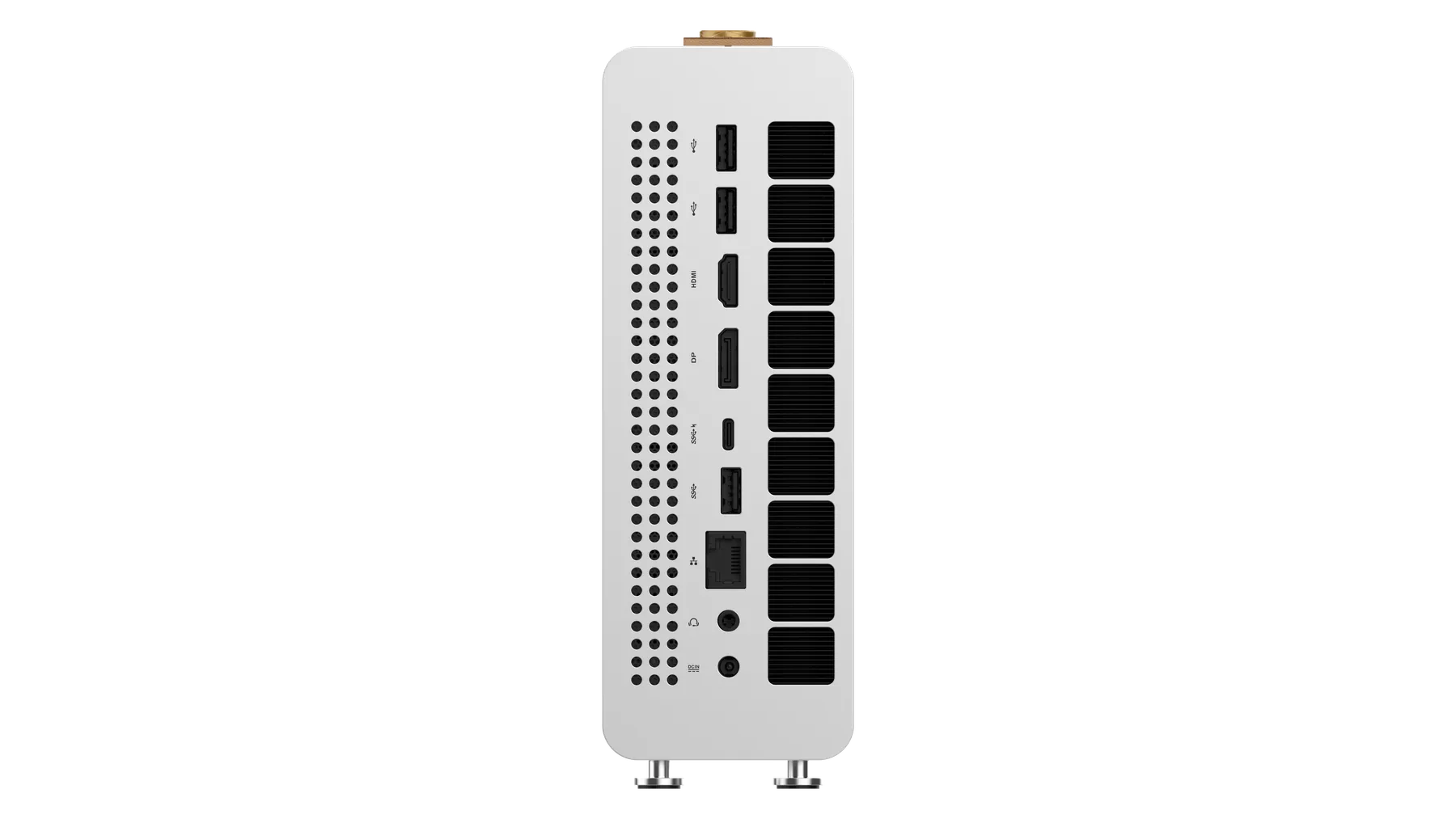

Industrial Connectivity

WiFi 7 (802.11be), Bluetooth 5.0+, 2.5G Ethernet. Deploy anywhere, connect reliably.

A New Class Of AI

Desktop

H13 combines high-power CPU cores, RDNA 3.5 graphics, and an industry-leading NPU into a single compact system. It is designed for developers, teams, and enterprises that need local, scalable, high-performance AI compute without relying on cloud infrastructure.

🚀Unprecedented AI Acceleration

- 50 TOPS NPU — industry leading

- Optimized for AI inference, Copilot+ PC, and LLM workloads

- High power range (120W / 132W) for sustained performance

🖥Discrete-Level Graphics Performance

- Built-in RDNA 3.5 graphics

- Up to 2.5× graphics performance vs Ryzen™ AI 300 Series

- Competitive with RTX 4070 mobile (up to 85W)

- Outperforms RTX 4060 mobile across workloads

🧠Massive Unified Memory

- Up to 128GB LPDDR5 (8× 8000MHz)

- Allocate up to 96GB as variable graphics memory

- Ideal for large AI models and high-resolution workloads

Endless Possibilities With The Ultras Powered H13

Edge AI Inference

Run AI models locally for real-time processing without cloud dependency

Local LLM Deployment

Deploy large language models on-premises for privacy and performance

AI-Powered Search

Search and retrieve information instantly with semantic capabilities

3D Rendering & Content

High-performance graphics processing for visualization and creation

Built For All, Built

For Everyone

From personal to enterprise, one system for all, RDNA 3.5 graphics, and an industry-leading NPU into a single compact system. Designed for developers, teams, and enterprises that need local, scalable, high-performance AI compute without relying on cloud infrastructure.

AI Research

Experiment with large models, fine-tune locally, no vendor dependency.

- LLM Training

- Model Research

- Local Deployment

Creator Studio

Video editing, 3D rendering, and AI-assisted production in one box.

- Video Editing

- 3D Rendering

- VFX & AI

Gaming & Content

Local LLM AI, 2.5X graphics performance, and real-time content generation.

- AAA Gaming

- AI-Generated Content

- 3D Modeling

Enterprise

Scalable clusters for regulated industries and high-security environments.

- Data Sovereign

- Multi-Unit Scale

- Zero Trust

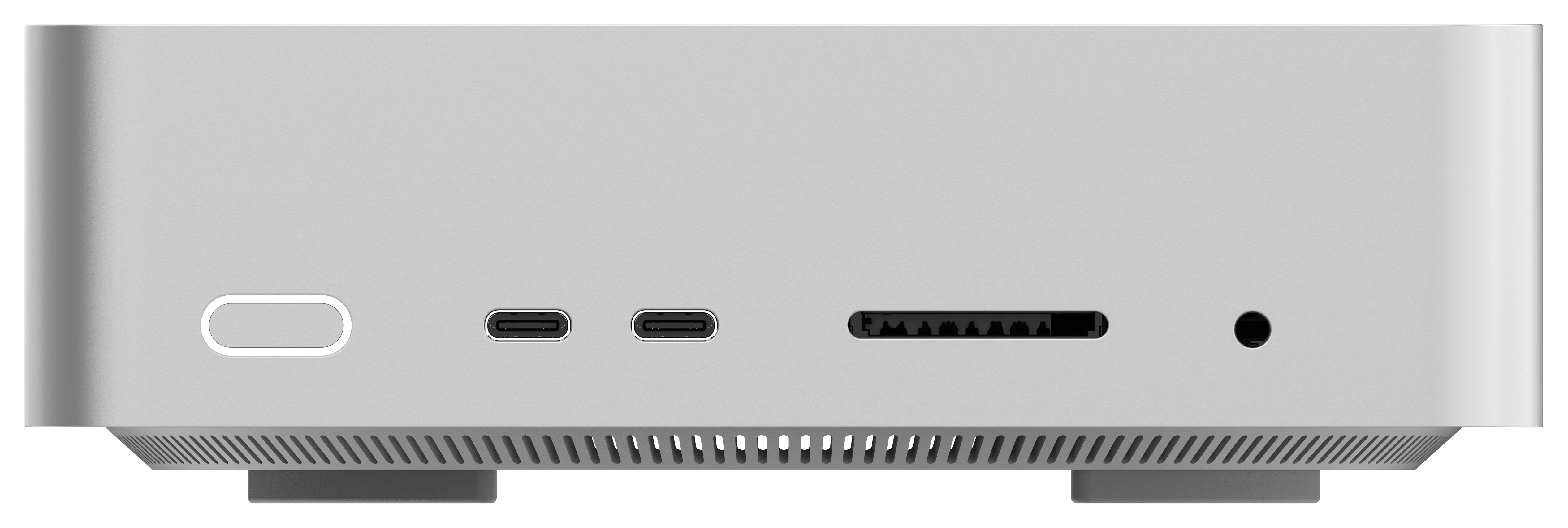

Key Specifications

See what innovators are building

AI Acceleration

50 TOPS

Dedicated NPU engine for edge AI inference. Run 235B parameter models locally at 13.4 tokens per second.

Model Compatibility: Qwen3, Llama, DeepSeek, and any OSS LLM

Memory

Up to 128GB

LPDDR5 @ 8000MHz

Graphics

RDNA 3.5

RTX 4070+ performance

Storage

2× M.2 2280

PCIe×4 NVMe SSD

Processor

AMD Ryzen AI

Strix Halo · 8-core

Networking

1× 2.5G LAN

WiFi 7 · Bluetooth 5+ · USB4

Power

240W

20V/12A external adapter

Ready To Experience AI Dominance?

Check More Products

Tools and strategies modern teams need to help their companies grow.

Hala Pulse(STHT1)

Ultra-compact 1L AI workstation with 16-core Strix Halo and Oculink eGPU expansion.

Hala Core(STHT1)

Apple-compete mini PC with USB4 V2 80Gbps, 10G LAN, and built-in speakers in a 127mm metal chassis.

Hala Apex(STHT1)

Discrete RX 7600 XT 16GB mini PC with AMD Hawk Point CPU, triple-fan cooling, and 336W internal ATX PSU.

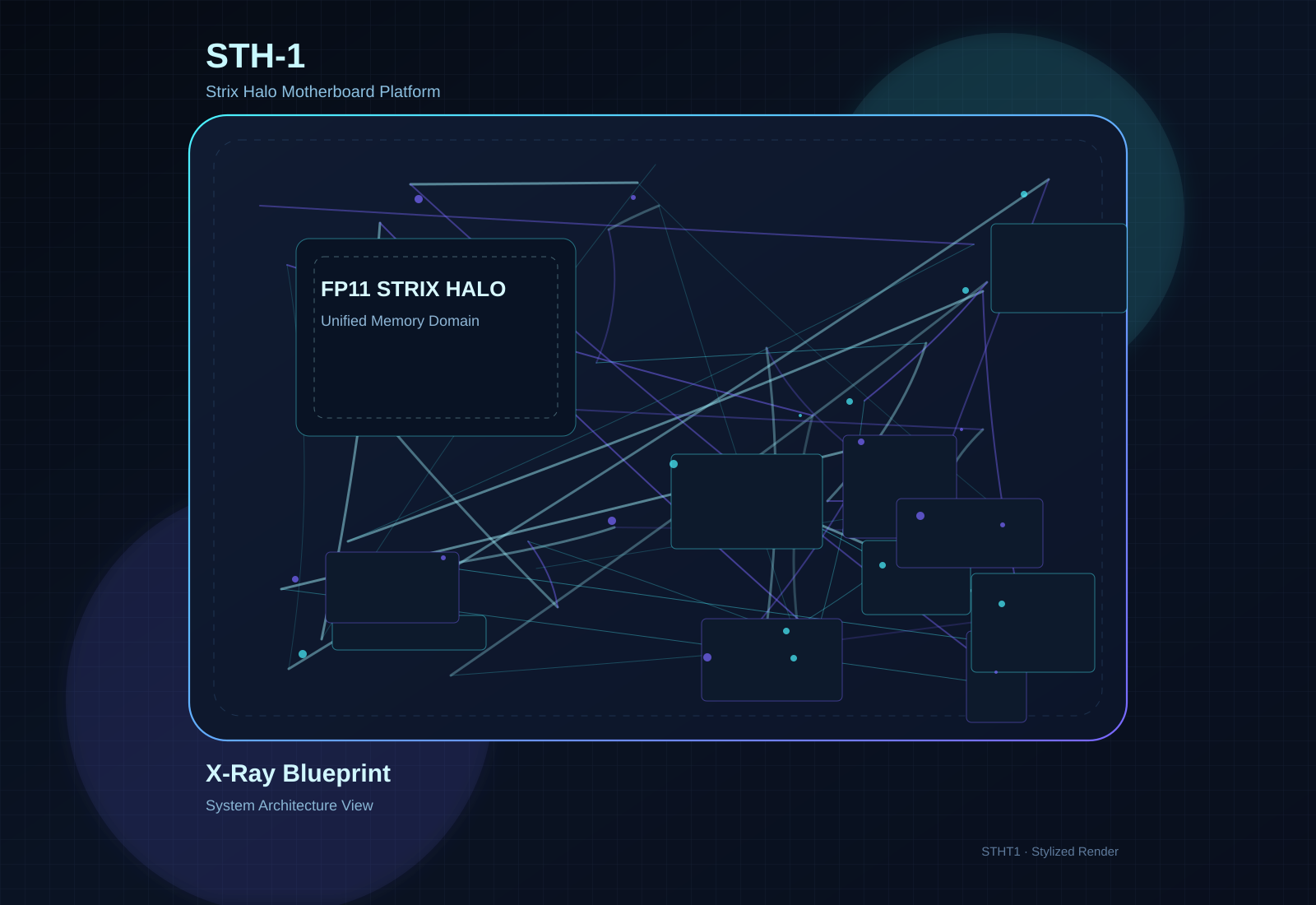

Motherboard STH-1

Thin Mini-ITX Strix Halo motherboard with up to 128GB LPDDR5X, dual M.2, and dual HDMI 2.1.

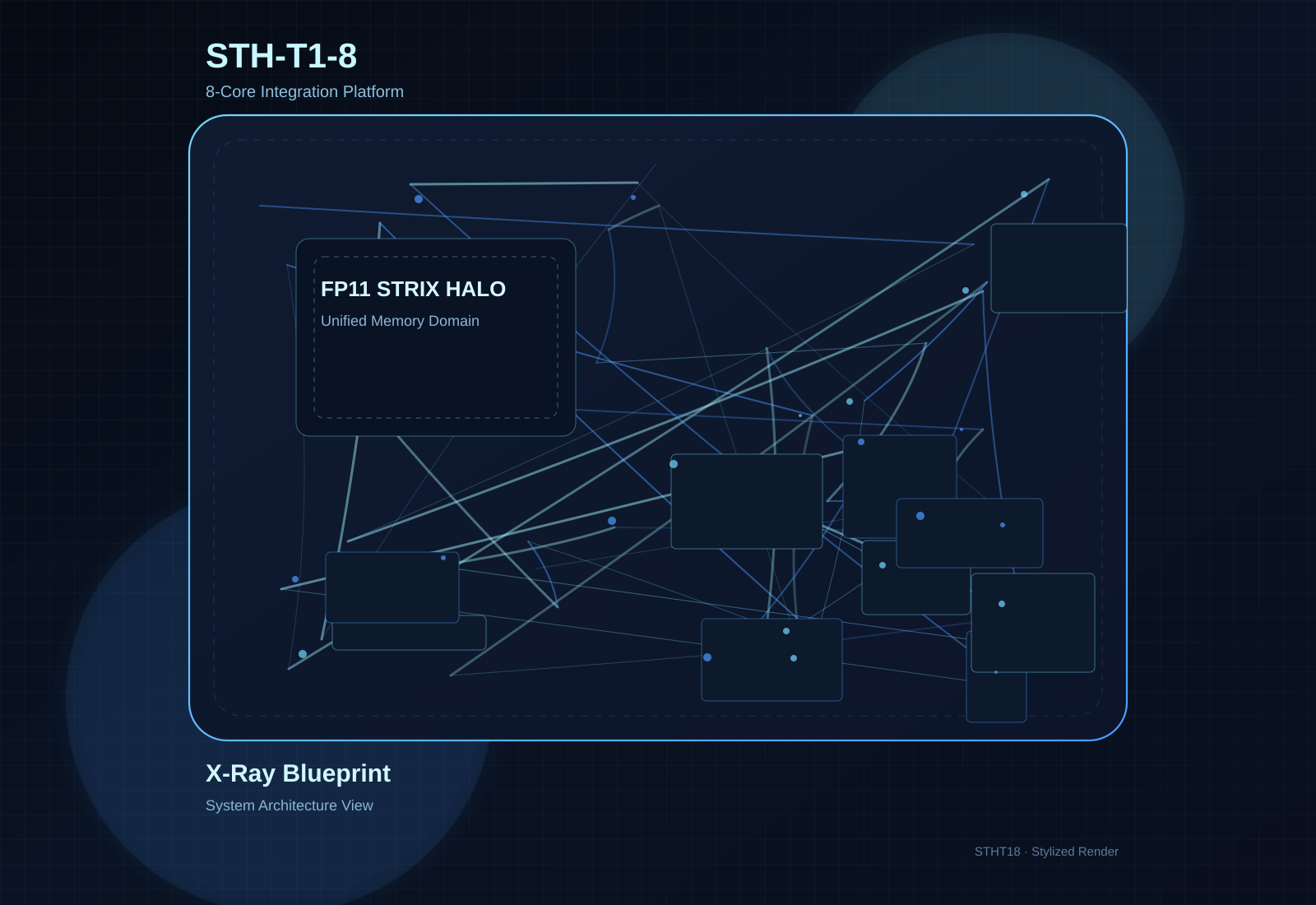

Motherboard STH-T1-8

8-core Strix Halo motherboard configuration with 128GB LPDDR5X, dual M.2 PCIe 4.0 x4, and 19V DC-in.